Code analysis prevents hidden security risks

Stephen Morrow


Ensuring security in software means starting at the source code: developers must build security in from the start. Organisations too often focus on repairing damage after a breach and fixing bugs after launch. Greater attention to security in the earlier stages of software development is needed. It would greatly reduce the percentage of successful attacks, and minimise the damage when malicious hackers do succeed.

SQS and ICT Institute security benchmarking

Security testing after the software is finished is sometimes done in the form of penetration testing. This reveals only some of the potential issues, and usually at a late stage. A much better way to increase application security is to build security into the code and to test (or verify) security levels during the development of a system. ICT Institute and SQS (Software Quality Systems AG) have developed a method for analysing the source code of systems and benchmarking the results to form a calibrated scale of security levels. ICT Institute and SQS have scanned or tested 50+ systems and performed a full code review on the 13 systems that were scanned in detail.

The results of this research project are frankly shocking and disturbing. In the 13 systems analysed in detail alone, there were 100,000 code-level issues, 80,000 of which were directly related to security. The median number of findings per system is 1,000. For a hacker, exploiting many of these findings does not require a lot of work, and often it does not even require access to the software itself. With SQL injection, for example, an attack is carried out simply by entering crafted input in a field on a web site through a normal Internet browser.
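To make the SQL injection example concrete, here is a minimal sketch using Python's standard `sqlite3` module. The `users` table, the function names and the payload are assumptions made up for illustration; they do not come from the benchmarked systems. The sketch contrasts a query built by string concatenation with one that uses a bound parameter.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: the user's input is concatenated into the SQL text,
    # so quotes in the input can change the structure of the query.
    query = "SELECT name, secret FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: the input is passed as a bound parameter and is only ever
    # treated as a value, never parsed as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_demo_db()
payload = "' OR '1'='1"  # what an attacker might type into a form field

unsafe_rows = find_user_unsafe(conn, payload)  # matches every row
safe_rows = find_user_safe(conn, payload)      # matches no row
```

With the concatenated query, the payload turns the `WHERE` clause into a condition that is always true, so every row comes back. With the parameterised query, the same input is compared literally against the `name` column and matches nothing.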

From the research, one can quickly conclude that the number of security issues in common current software is much higher than expected. With over 1,000 issues per system, there is significant testing and rework to be done before software can be considered to have an acceptable security level. What counts as an acceptable level of security will obviously differ per system and per the requirements of a particular use. Our findings clearly indicate that a huge amount of work remains to be done to make software itself more secure.

Security findings per category

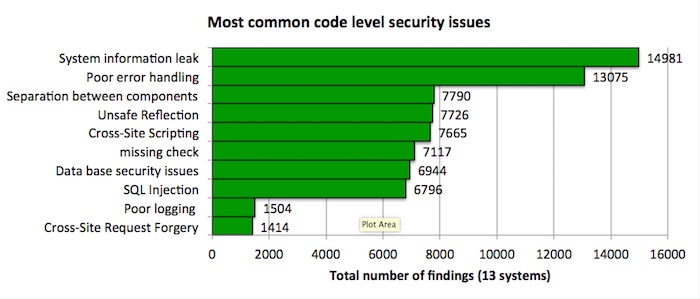

As part of the analysis effort, all issues are placed into categories. We do this not just to understand the root causes of issues, but also to estimate the time needed to fix the system. There are several well-known categorisations, such as the OWASP Top 10. On the other hand, there are source scanning tools such as Fortify that have dozens of categories. For this benchmark, 50 categories are used, since this provides the best trade-off between management insight and technical depth. Using these categories, one can quickly determine which types of vulnerability are most common. The top 10 most common categories (out of the total of 50 categories) are shown in the figure.
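The categorisation step can be sketched in a few lines of Python. Everything in this sketch is an assumption made up for illustration: the findings, the category names and the hours per fix are not benchmark data, and the real method may weigh findings differently. It only shows how grouping findings by category gives both a "most common" ranking and a rough fix estimate.

```python
from collections import Counter

# Hypothetical findings as (system, category) pairs; not data from the benchmark.
findings = [
    ("system-a", "information leak"),
    ("system-a", "information leak"),
    ("system-a", "sql injection"),
    ("system-b", "information leak"),
    ("system-b", "cross-site scripting"),
]

# Hypothetical effort per finding in hours, used only to illustrate the estimate.
HOURS_PER_FIX = {
    "information leak": 0.5,
    "sql injection": 2.0,
    "cross-site scripting": 1.5,
}


def top_categories(findings, n=10):
    """Return the n most common categories together with their counts."""
    counts = Counter(category for _system, category in findings)
    return counts.most_common(n)


def estimated_fix_hours(findings):
    """Sum the assumed per-category effort over all findings."""
    return sum(HOURS_PER_FIX[category] for _system, category in findings)


top = top_categories(findings)
hours = estimated_fix_hours(findings)
```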

The most common issue found is the ‘information leak’. This type of error leads to hackers learning information about the internal workings of applications. This is not a data breach by itself, but is often used by hackers to determine what systems to attack.
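One common form of information leak is an error response that exposes internal details. The sketch below is a hypothetical illustration, not code from the analysed systems: `load_account`, the host name in the error message and the response format are all invented. It contrasts a handler that returns the stack trace to the client with one that logs the details on the server and returns only a generic message with an opaque reference.

```python
import logging
import traceback
import uuid

logger = logging.getLogger("app")


def load_account(account_id):
    # Hypothetical operation whose failure message contains internal details.
    raise RuntimeError("connection to db-internal-01:5432 failed for user svc_app")


def handle_leaky(account_id):
    # Leaky: the raw exception and stack trace go straight to the client,
    # revealing host names, account names and code structure.
    try:
        return load_account(account_id)
    except Exception:
        return {"status": 500, "body": traceback.format_exc()}


def handle_safe(account_id):
    # Safer: details stay in the server log; the client gets a generic
    # message plus an opaque reference that support staff can look up.
    try:
        return load_account(account_id)
    except Exception:
        ref = uuid.uuid4().hex
        logger.exception("request failed, ref=%s", ref)
        return {"status": 500, "body": f"Internal error (ref {ref})"}


leaky = handle_leaky(42)
safe = handle_safe(42)
```

The leaky response tells an attacker which database host and service account the application uses; the safe response carries the same status code without any of that detail.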

Recommendations

- Define a required security level before the start of the software development. The OWASP Top 10 and the OWASP Application Security Verification Standard (ASVS) are a good place to start. More details are needed to make a good set of acceptance criteria or Definition of Done.

- Use design methods with security standards during the development of the software (such as a secure SDLC). Integrating security in the software development process is more or less a required goal under ISO 27001. Using tools such as Visual Studio or other code quality tools is helpful if it is part of a well-designed process.

- Ask an independent party to do regular testing for security during the build phase (e.g. using the ICT Institute / SQS benchmarking service) and use the findings in your development to make immediate improvements.
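A required security level becomes easier to enforce once it is written down as checkable acceptance criteria. The following is a minimal sketch under stated assumptions: the severity names and the limits are hypothetical, and a real Definition of Done would be derived from the chosen standard (for example the relevant ASVS requirements) rather than from counts alone.

```python
# Hypothetical acceptance criteria: maximum open findings allowed per severity.
MAX_OPEN_FINDINGS = {"critical": 0, "high": 0, "medium": 5}


def violations(open_findings, limits=MAX_OPEN_FINDINGS):
    """Return {severity: (open_count, limit)} for every exceeded limit."""
    return {
        severity: (open_findings.get(severity, 0), limit)
        for severity, limit in limits.items()
        if open_findings.get(severity, 0) > limit
    }


def meets_definition_of_done(open_findings):
    """True when no severity exceeds its agreed limit."""
    return not violations(open_findings)


# Hypothetical result of a scan during the build phase.
scan = {"critical": 0, "high": 2, "medium": 3}
result = violations(scan)
```

Run against each scan during the build phase, a check like this turns the findings into an immediate pass or fail signal for the development team.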

Stephen is a specialist in secure application design, development and testing. He is responsible for leading the SQS security testing practice and defining SQS security testing methodologies. SQS is a partner of ICT Institute.