Implementing ISO 42001: example audit report

Pavlo Burda


In our previous article on the AI Risk Management System, we explained how an AI management system (AIMS) can help organizations structure AI governance and support compliance efforts with the AI Act. Here we introduce our new template for auditing and structuring your AIMS and preparing for ISO 42001 certification.

What is the AIMS?

ISO 42001 requires organizations to build and maintain an AIMS. Like other ISO management systems, this is fundamentally a structured framework for managing risk, assigning responsibilities, documenting processes, monitoring performance, and improving over time. If you are familiar with ISO 27001, you can think of the AIMS as a management system for AI: it applies similar governance logic, but focuses specifically on AI systems, models, data, and use cases.

If I am ISO 42001 certified, am I compliant with the AI Act?

ISO 42001 certification does not by itself make an organization compliant with the EU AI Act. Legal and product-regulatory duties such as conformity assessment, CE marking, EU declaration of conformity, EU database registration, and incident reporting remain outside the scope of the standard. Still, a working AIMS helps structure the processes and evidence needed for many AI Act requirements, such as risk management, data governance, documentation, monitoring, and corrective action. That is why we recommend implementing an AIMS to support AI Act compliance.

AIMS implementation and audit template

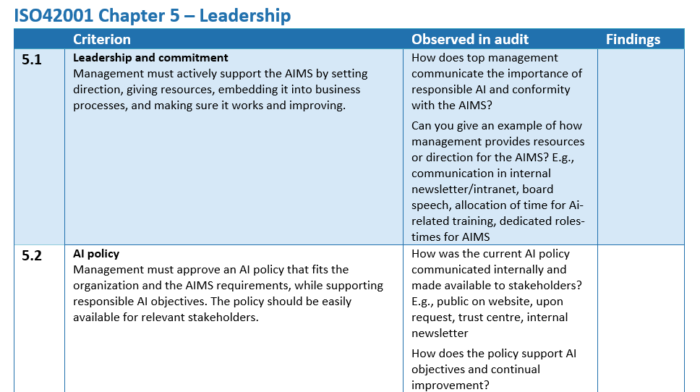

To build an effective AIMS and prepare for ISO 42001 certification, it helps to think directly in terms of the final deliverable: passing the audit of the AIMS. The audit assesses how convincing and functional the organizational machinery for managing AI-related risks within the organization is. Our AIMS audit template is designed for exactly that purpose: it guides organizations through building an effective AIMS and checking whether it is documented, implemented, maintained, and improved in practice. The neat part is that by following this approach, you go through the internal audit process yourself, which prepares you for exactly that critical audit phase. For example, see the typical steps of a two-day audit of the AI management system in the accompanying picture.

The template follows the full structure of ISO 42001. It covers the Harmonized Structure chapters 4 to 10, as well as the key Annex A controls, and turns them into practical audit questions, evidence prompts, audit planning sections, and finding categories such as observations, minor non-conformities, and non-conformities. It also includes space for audit scope, methodology, management summary, prior findings, core AIMS documents, and a two-day audit plan.
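To make the finding categories concrete, each audit question can be tracked as a small record. The following is a minimal Python sketch and not part of the template itself: the class names, fields, and example questions are hypothetical, and only the three finding categories are taken from the description above.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Finding(Enum):
    # Finding categories named in the template description.
    OBSERVATION = "observation"
    MINOR_NON_CONFORMITY = "minor non-conformity"
    NON_CONFORMITY = "non-conformity"


@dataclass
class AuditItem:
    reference: str                                      # clause or Annex A control
    question: str                                       # audit question (hypothetical wording)
    evidence: List[str] = field(default_factory=list)   # documents or artefacts reviewed
    finding: Optional[Finding] = None                   # None means no finding was raised


def summarize(items: List[AuditItem]) -> Counter:
    """Count raised findings per category, ignoring items without a finding."""
    return Counter(item.finding for item in items if item.finding is not None)


def missing_evidence(items: List[AuditItem]) -> List[str]:
    """Return the references of items for which no evidence has been recorded."""
    return [item.reference for item in items if not item.evidence]


# Hypothetical example entries, for illustration only.
items = [
    AuditItem("Clause 5", "Is there an approved AI policy?", ["AI policy v1"]),
    AuditItem("Clause 9", "Is AIMS performance monitored?", [], Finding.MINOR_NON_CONFORMITY),
]

print(summarize(items))
print(missing_evidence(items))
```

Keeping evidence and findings on the same record makes it easy to see which questions still lack supporting artefacts before the audit.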

The template can be immediately used as an implementation roadmap. This is useful because many organizations struggle with the same practical question: where do we actually start? A management system standard can feel abstract when read clause by clause. By contrast, working through audit questions and expected evidence immediately translates the standard into concrete actions (see picture). It helps identify gaps early, define the documents and artefacts you need, assign responsibilities, and gradually build a system that is ready both for day-to-day governance and for certification review.

Key points

Context, Leadership and Risk management

Implementation starts with context and leadership. Management should define the scope, intended use, stakeholders, and internal responsibilities of the AIMS. Leadership must also set the policy, objectives, and accountability for responsible AI use.

At the core of the system is risk management. The AIMS requires organizations to establish, implement, document, and maintain a risk management life cycle for AI, covering risks such as bias, unsafe outcomes, lack of transparency, poor oversight, misuse, and model drift. This aligns directly with Article 9 of the AI Act. ISO 42001 supports this through Chapters 6 and 8 and the relevant Annex A5 controls.
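As an illustration of what a documented risk life cycle can look like in practice, here is a minimal risk register sketch in Python. ISO 42001 does not prescribe a scoring method: the 1-to-5 likelihood and impact scales, their multiplication, the threshold of 12, and all names and example entries are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIRisk:
    system: str          # AI system or use case (hypothetical name)
    description: str     # e.g. bias, model drift, misuse
    likelihood: int      # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int          # assumed scale: 1 (negligible) to 5 (severe)
    owner: str           # person or role accountable for treatment
    treatment: str = ""  # planned or implemented mitigation, empty if none yet

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1-to-5 scales.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.likelihood * self.impact


def needs_treatment(register: List[AIRisk], threshold: int = 12) -> List[AIRisk]:
    """Risks at or above the threshold with no treatment recorded, highest score first."""
    open_risks = [r for r in register if r.score >= threshold and not r.treatment]
    return sorted(open_risks, key=lambda r: r.score, reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    AIRisk("cv-screening", "bias against protected groups", 4, 5, "HR lead"),
    AIRisk("cv-screening", "model drift", 3, 3, "ML engineer"),
    AIRisk("chatbot", "misuse by end users", 4, 3, "Product owner", "usage policy and rate limits"),
]

for risk in needs_treatment(register):
    print(risk.system, risk.description, risk.score)
```

Whatever scoring scheme an organization chooses, the point for the audit is that each risk has an owner, a documented assessment, and a traceable treatment decision.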

AI impact assessment

ISO 42001 explicitly requires organizations to assess the impact of AI systems on individuals, groups, and society in Clause 6.1.4 and the four dedicated Annex A5 controls. This is closely related to the AI Act requirement (Article 27) for certain deployers of high-risk AI systems to perform a Fundamental Rights Impact Assessment (FRIA). The AIMS supports this by providing the governance and documentation structure needed to perform such assessments consistently.

Monitoring and human oversight

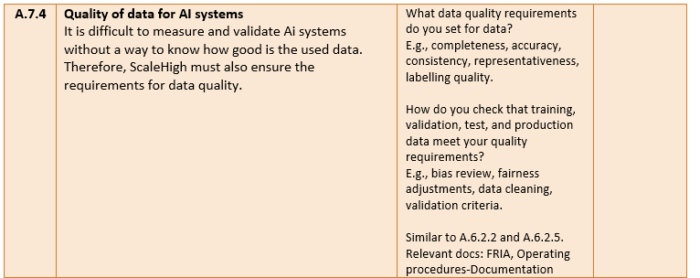

Monitoring and human oversight remain essential after deployment. Organizations should define what they monitor, how incidents are reviewed, how degradation or misuse is detected, and how human intervention works in practice. Article 14 of the AI Act requires meaningful human oversight, and several other articles require relevant incident recording and reporting. ISO 42001 supports this through Chapter 9 and controls on oversight, logging, traceability, and responsible AI objectives (A6.2.6, A6.2.8, A7.4 and A9).
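A monitoring rule with an escalation path to a human reviewer might be sketched as follows. This is an illustrative Python example only: the accuracy metric, the tolerance of 0.05, and the function and field names are assumptions, not requirements of ISO 42001 or the AI Act.

```python
import logging
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional

logger = logging.getLogger("aims.monitoring")  # hypothetical logger name


@dataclass
class MonitoringEvent:
    system: str
    metric: str
    baseline: float
    observed: float
    timestamp: datetime
    requires_human_review: bool


def check_metric(
    system: str,
    metric: str,
    baseline: float,
    observed: float,
    tolerance: float = 0.05,
    event_log: Optional[List[MonitoringEvent]] = None,
) -> MonitoringEvent:
    """Record a monitoring event and flag it for human review when the
    observed value falls more than `tolerance` below the baseline."""
    degraded = (baseline - observed) > tolerance
    event = MonitoringEvent(
        system=system,
        metric=metric,
        baseline=baseline,
        observed=observed,
        timestamp=datetime.now(timezone.utc),
        requires_human_review=degraded,
    )
    # Every check is appended to the log, not only the degraded ones,
    # so the record stays traceable.
    if event_log is not None:
        event_log.append(event)
    if degraded:
        logger.warning(
            "%s: %s dropped from %.2f to %.2f, human review required",
            system, metric, baseline, observed,
        )
    return event


# Hypothetical values, for illustration only.
event_log: List[MonitoringEvent] = []
event = check_metric("cv-screening", "accuracy", baseline=0.92, observed=0.85, event_log=event_log)
print(event.requires_human_review)
```

The sketch only raises the flag; who reviews the event, within what time, and with what authority to intervene is what the standard operating procedure should define.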

Annex A of the standard contains 38 controls in total. For each Annex A section, we provide concrete questions; to answer them, you need to implement the corresponding control. We also provide typical examples of the documentation and artefacts needed for that control. See, for instance, the FRIA and the standard operating procedures document in the pictures above.

Download and use the ISO 42001 audit report template here. You can read more about the AIMS in the AI Act Risk Management System article, and find other templates on our free templates page and GitHub.

Cover: Photo by ZHENYU LUO on Unsplash

Dr. Pavlo Burda is an IT consultant and researcher specializing in emerging cybersecurity threats and people analytics for security.