Measuring and monitoring your ISO 27001 ISMS

Joost Krapels

Security

Measuring and monitoring information security is required under clause 9.1 of ISO 27001. In this article we explain how to effectively monitor and measure your ISMS.

Why would you monitor and measure?

The goal of setting up a functioning Information Security Management System (ISMS) is to control risks to your organization. The full set of policies, procedures, tasks, meetings, and technologies protects against actions and events that could harm employees, clients, revenue, and even the existence of your organization. Keeping this defense in good working order requires planning, measuring, monitoring, and evaluation. After all, you wouldn’t step into an airplane that was built without schematics and is never inspected by aviation engineers: that is just asking for trouble.

The International Organization for Standardization (ISO) is fully aware of this, and has made monitoring and measuring your ISMS mandatory for ISO 27001 certification.

What does the standard say?

Clause 9.1, officially titled “Monitoring, measurement, analysis and evaluation”, requires you to determine, and in practice document, the following:

- What you will monitor and measure: the processes, controls, departments, or ISMS topics you want to keep tabs on. These are your metrics.

- Exactly how you will monitor, measure, analyze, and evaluate

- When you will monitor and measure

- Who will monitor and measure

- When you will analyze and evaluate

- Who will evaluate

Below, we describe these requirements in more detail. At the end of the article, we show what following all these steps could look like in practice.

a. What to monitor and measure

The first step is to determine your metrics: which indicators give you insight into the health and performance of your ISMS? A good place to start is your ISMS objectives, which you are required to set anyway under clause 6.2 of ISO 27001. You can expand this list by going through your policy and procedure documents, looking for phrases such as “we have a policy/procedure/process for XYZ” or “to protect against/reduce the risk of XYZ, we do/perform/check ABC”. Such sentences indicate that you have a process in place or perform a specific action to protect information.

At first sight, monitoring and measuring look the same in an ISMS, but there is a subtle difference. Monitoring is observing data that a process or system creates by itself. Measuring, on the other hand, requires data to be collected through a deliberate action. You can, for example, monitor the availability of your website by checking the uptime percentage of your web server on a dashboard, or measure it by counting how many server crash reports were created in your ticketing system.
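To make the distinction concrete, here is a minimal Python sketch. It assumes the web server reports its total downtime for a period, and that crash reports can be exported from the ticketing system as records with a `category` field; both data formats are hypothetical, not the output of any specific tool.

```python
from datetime import timedelta


def uptime_percentage(period: timedelta, downtime: timedelta) -> float:
    """Monitoring: derive availability from downtime data the server already records."""
    if period <= timedelta(0):
        raise ValueError("period must be positive")
    return 100 * (period - downtime) / period


def count_crash_reports(tickets: list[dict]) -> int:
    """Measuring: actively count tickets whose (hypothetical) 'category' is 'server crash'."""
    return sum(1 for ticket in tickets if ticket.get("category") == "server crash")


print(uptime_percentage(timedelta(days=10), timedelta(days=1)))  # 90.0
print(count_crash_reports([{"category": "server crash"}, {"category": "password reset"}]))  # 1
```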

Examples of metrics are:

- Percentage of project plans that contain information security requirements

- Percentage of personnel present during the most recent awareness training

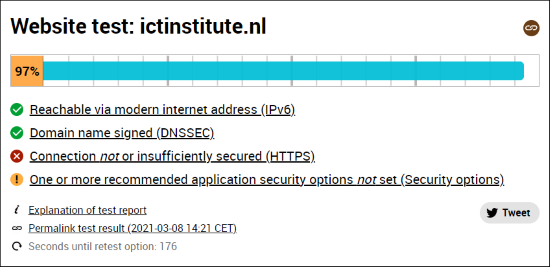

- Website security score on internet.nl

- Total number of deviations from the access policy in the last month

- Number of security incidents in the last twelve months

b. How to monitor, measure, analyze and evaluate

Only a strictly defined process produces results that are comparable over time. For this reason, there should be no doubt about how your ISMS health indicators are monitored, measured, analyzed, and evaluated. Should, for example, all security incidents in a month be counted, or only those resulting in damage above 1,000 euros? Likewise, for an internet.nl check, document how the score is obtained and how it is compared to your target, so you can tell whether you meet your goal or should work on improving the score.
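The two incident definitions can yield different numbers for the same month, which is exactly why the method must be written down. A minimal Python sketch, assuming a hypothetical incident register that stores a reporting month and an estimated damage amount per incident:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Incident:
    month: str          # reporting month, e.g. "2024-03" (hypothetical format)
    damage_eur: float   # estimated damage in euros


def count_incidents(incidents: list[Incident], month: str, min_damage_eur: Optional[float] = None) -> int:
    """Count incidents in `month`; if a threshold is given, only those with damage above it."""
    return sum(
        1
        for incident in incidents
        if incident.month == month
        and (min_damage_eur is None or incident.damage_eur > min_damage_eur)
    )


incidents = [Incident("2024-03", 250.0), Incident("2024-03", 4000.0), Incident("2024-04", 1500.0)]
print(count_incidents(incidents, "2024-03"))        # 2: every incident counts
print(count_incidents(incidents, "2024-03", 1000))  # 1: only damage above 1,000 euros
```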

For analysis and evaluation, even the mathematics used can be important. Take, for example, your email spam filter. Do we aim for high accuracy or high precision? With accuracy, we answer the question: “for what percentage of all emails is our spam filter correct?”. With precision, we answer the question: “what percentage of the emails we flagged as spam is actually spam?”. If you want to minimize the chance of a spam email ending up in the normal inbox, accuracy is the better choice of the two: precision only looks at emails flagged as spam, so spam that slips through to the inbox does not affect it at all, while accuracy counts every such email as an error. For this reason, you should double (or even triple) check whether your data collection and processing support your information need.
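Both metrics can be written out in a few lines of Python, using the usual counts: `tp` (spam flagged as spam), `fp` (legitimate mail flagged as spam), `tn` (legitimate mail delivered normally), and `fn` (spam delivered to the inbox). The numbers below are invented for illustration: they describe a filter with perfect precision that still lets half of the spam through, which of these two metrics only accuracy reveals.

```python
def accuracy(tp: int, fp: int, tn: int, fn: int) -> float:
    """Share of all emails the filter classified correctly."""
    return (tp + tn) / (tp + fp + tn + fn)


def precision(tp: int, fp: int) -> float:
    """Share of emails flagged as spam that really are spam."""
    return tp / (tp + fp)


# Hypothetical month: 100 spam and 900 legitimate emails.
# The filter flags 50 emails, all of them spam, and lets the other 50 spam emails through.
tp, fp, tn, fn = 50, 0, 900, 50
print(precision(tp, fp))         # 1.0: looks perfect
print(accuracy(tp, fp, tn, fn))  # 0.95: the 50 missed spam emails count as errors
```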

c. When to monitor and measure

For both monitoring and measuring of ISMS health indicators, you should clearly document when this is done: when do you record the results? One way is to add a ‘measurement frequency’ field to your overview of indicators and fill it in for every indicator. Possible values are: once a year, once each quarter, once a month, once a week.
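Such an overview can also tell you when a measurement is overdue. A minimal Python sketch, assuming each indicator records the date it was last measured; the class and field names are hypothetical, and the day counts per frequency are a simplification (a month as 30 days, a quarter as 91):

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Frequency(Enum):
    # Approximate interval in days for each measurement frequency (simplification).
    WEEKLY = 7
    MONTHLY = 30
    QUARTERLY = 91
    YEARLY = 365


@dataclass
class Indicator:
    name: str
    measurement_frequency: Frequency
    last_measured: date

    def next_due(self) -> date:
        """Date on which the next measurement should be recorded."""
        return self.last_measured + timedelta(days=self.measurement_frequency.value)

    def is_overdue(self, today: date) -> bool:
        return today > self.next_due()


awareness = Indicator("Awareness training attendance", Frequency.QUARTERLY, date(2024, 1, 15))
print(awareness.next_due())                    # 2024-04-15
print(awareness.is_overdue(date(2024, 5, 1)))  # True
```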

d. Who will monitor and measure

Even with a perfect plan for what to measure and when, no data is collected unless someone is appointed to execute the plan. Monitoring and measuring should be performed by someone familiar with the process or activity. For web server uptime data, this could be the lead developer. For personnel awareness training data, this could be an HR manager. In smaller organizations, it is likely that an information security team member will perform the monitoring and measuring.

e. When to analyze and evaluate

Analyzing and evaluating the collected data is usually done at longer intervals than the collection itself. Plan evaluation moments based on how urgently action is needed in case of a negative outcome. If you keep track of the percentage of incidents closed out of the total number of incidents, you might want to evaluate this monthly in the already planned information security meeting. Less urgent indicators, such as the percentage of developers who received their annual specialized security training, can be evaluated once or twice a year. Analysis takes place before evaluation, so make sure to plan enough time between data collection and evaluation.
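The incident closure indicator is a simple ratio, but the analysis step should still agree on edge cases. A minimal Python sketch; returning `None` for a period without incidents is an assumed convention, chosen so that an empty month is not reported as a misleading 0% or 100%:

```python
from typing import Optional


def closure_rate(closed: int, total: int) -> Optional[float]:
    """Percentage of incidents closed out of all incidents in the period.

    Returns None when there were no incidents (assumed convention).
    """
    if total == 0:
        return None
    return 100 * closed / total


print(closure_rate(closed=18, total=24))  # 75.0
print(closure_rate(closed=0, total=0))    # None
```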

f. Who will analyze and evaluate

Evaluating the performance on an indicator is usually done by the manager responsible for that topic or department. While the board and CEO are ultimately responsible for the whole ISMS, they might not have enough knowledge to evaluate the results on all ISMS performance indicators. The CTO or CISO is usually the right person for technical metrics, while HR and other organizational metrics are likely to fall under the COO’s responsibility. Analysis, just like monitoring and measuring, can be done by anyone with enough knowledge of the topic at hand.

Putting it all together

When all six steps are followed for one topic, the metric is complete. In practice, these six steps are usually less strictly separated than in theory. Below are two practical example cases:

Case 1: clean desk and clear screen

Unauthorized information disclosure is not bound to the digital realm. If a computer or document on a desk is left unattended and can be accessed, there is a risk that information will be extracted from them. For this reason, many organizations put in place a Clean desk and Clear screen policy: no information may be left unattended on desks, and computers must be locked before you walk away from them. Measuring and monitoring of this policy could be documented as follows:

Step 1: Describe the metric

The number of unattended desks with a logged-in computer/tablet or physical information assets classified “internal” or higher. Every instance counts as a Strike.
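The Strike definition can be expressed as a single check. A minimal Python sketch, assuming a four-level classification scheme (public, internal, confidential, secret); your organization's labels may differ, and the `DeskObservation` record is hypothetical:

```python
from dataclasses import dataclass

# Assumed classification scheme, ordered from lowest to highest.
CLASSIFICATION_LEVELS = ["public", "internal", "confidential", "secret"]


@dataclass
class DeskObservation:
    employee: str
    unattended: bool
    device_logged_in: bool
    asset_classifications: list[str]  # labels of documents/media left on the desk


def is_strike(obs: DeskObservation) -> bool:
    """Unattended desk with a logged-in device or an asset classified 'internal' or higher."""
    if not obs.unattended:
        return False
    threshold = CLASSIFICATION_LEVELS.index("internal")
    # .index() raises ValueError for an unknown label, which surfaces data-entry errors.
    sensitive_asset = any(
        CLASSIFICATION_LEVELS.index(label) >= threshold for label in obs.asset_classifications
    )
    return obs.device_logged_in or sensitive_asset


print(is_strike(DeskObservation("alice", True, False, ["public"])))        # False
print(is_strike(DeskObservation("bob", True, False, ["confidential"])))    # True
```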

Step 2: Collect the data

One week before the bi-annual Operations Security meeting, on a Monday at lunchtime, the Security Officer walks through the office, counts the number of Strikes, and records which employee is responsible for each one. For every Strike, they take a picture and make a note of the situation.

Step 3: Analyze the data

The Security Officer adds the newly collected data to the Clean desk register as a new column. They count the number of Strikes identified during the most recent data collection, and the total number of Strikes for every employee.
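The register bookkeeping in this step can be sketched in Python. This is a minimal illustration, assuming the register is kept as one column per collection round mapping each employee to their Strikes in that round; the layout and the `add_round` helper are hypothetical:

```python
from collections import Counter

# Hypothetical Clean desk register: round label -> Strikes per employee in that round.
register: dict[str, Counter] = {}


def add_round(register: dict[str, Counter], round_label: str, strikes: list[str]) -> tuple[int, Counter]:
    """Add a new column (one employee name per Strike) and return
    (number of new Strikes, total Strikes per employee over all rounds)."""
    register[round_label] = Counter(strikes)
    new_strikes = sum(register[round_label].values())
    totals: Counter = Counter()
    for column in register.values():
        totals.update(column)  # adds counts per employee
    return new_strikes, totals


add_round(register, "2024-H1", ["alice", "bob"])
new, totals = add_round(register, "2024-H2", ["bob", "carol", "bob"])
print(new)            # 3
print(totals["bob"])  # 3
```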

Step 4: Evaluate

In the bi-annual Operations Security meeting, the CISO, Security Officer, and Operations Manager discuss the Strikes. If there are more than five new Strikes, the team plans a company-wide meeting to address the issue. Employees who “earned” their first Strike receive a verbal warning, while a second Strike warrants a written warning.
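The evaluation rules of this case can be written down unambiguously as a small function. A minimal Python sketch; the input format is assumed, and because the example policy does not say what happens after a second Strike, the sketch only flags that situation for discussion:

```python
def evaluate(new_by_employee: dict[str, int], totals: dict[str, int]) -> tuple[bool, dict[str, str]]:
    """Apply the example rules: a company-wide meeting if there are more than five
    new Strikes, a verbal warning for a first Strike, a written warning for a second."""
    plan_company_meeting = sum(new_by_employee.values()) > 5
    actions: dict[str, str] = {}
    for employee, new in new_by_employee.items():
        if new == 0:
            continue
        total = totals[employee]
        if total == 1:
            actions[employee] = "verbal warning"
        elif total == 2:
            actions[employee] = "written warning"
        else:
            # Not covered by the example policy: leave the decision to the meeting.
            actions[employee] = "discuss: not covered by the policy"
    return plan_company_meeting, actions


meeting, actions = evaluate({"bob": 1, "carol": 1}, {"alice": 1, "bob": 2, "carol": 1})
print(meeting)  # False
print(actions)  # {'bob': 'written warning', 'carol': 'verbal warning'}
```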

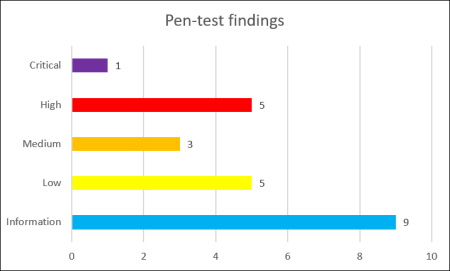

Case 2: Pen-test findings

For the second example, we focus on software security. Your IT landscape should be protected against attacks, and a good way to verify this is to perform an annual pen-test. How quickly the findings from pen-tests are resolved reflects how well your security vulnerability patch process works.

Step 1: Describe the metric

The time, in days, weeks, or months, it takes to resolve each pen-test finding.

Step 2: Collect the data

One week before the quarterly InfoSec management meeting, the CTO creates a list of pen-test findings, noting for each whether the time to fix was below the threshold (pass) or above it (fail). The thresholds for fixing pen-test findings are: <1 day for critical, <2 weeks for high, <1 month for medium, <2 months for low, and <1 year for informational.
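The pass/fail check against these thresholds is mechanical, which makes it easy to script. A minimal Python sketch; approximating "1 month" as 30 days, "2 months" as 60 days, and "1 year" as 365 days is an assumption, as is the format of the findings list:

```python
from datetime import timedelta

# Thresholds from the example; month and year lengths are approximations (assumption).
FIX_THRESHOLDS = {
    "critical": timedelta(days=1),
    "high": timedelta(weeks=2),
    "medium": timedelta(days=30),
    "low": timedelta(days=60),
    "informational": timedelta(days=365),
}


def check_finding(severity: str, time_to_fix: timedelta) -> str:
    """Return 'pass' if the finding was fixed strictly within its threshold, else 'fail'."""
    return "pass" if time_to_fix < FIX_THRESHOLDS[severity] else "fail"


findings = [("critical", timedelta(hours=20)), ("high", timedelta(days=20)), ("low", timedelta(days=10))]
results = [check_finding(severity, time) for severity, time in findings]
print(results)  # ['pass', 'fail', 'pass']
```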

Step 3: Analyze the data

The CTO determines the root cause of each finding that was not fixed in time, and calculates the percentage of findings that passed and failed.
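The percentages and a tally of root causes can be produced together. A minimal Python sketch, assuming each finding is recorded as a pass/fail outcome plus a root cause for failed ones; the root cause labels are hypothetical:

```python
from collections import Counter
from typing import Optional


def summarize(results: list[tuple[str, Optional[str]]]) -> tuple[float, float, Counter]:
    """Return (% pass, % fail, frequency of root causes among failed findings)."""
    if not results:
        raise ValueError("no findings to summarize")
    failed_causes = [cause for outcome, cause in results if outcome == "fail"]
    fail_pct = 100 * len(failed_causes) / len(results)
    return 100 - fail_pct, fail_pct, Counter(failed_causes)


results = [("pass", None), ("fail", "no owner assigned"), ("pass", None), ("fail", "no owner assigned")]
pass_pct, fail_pct, causes = summarize(results)
print(pass_pct, fail_pct)     # 50.0 50.0
print(causes.most_common(1))  # [('no owner assigned', 2)]
```

Counting root causes makes the most frequent one stand out, which gives the evaluation meeting a concrete starting point.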

Step 4: Evaluate

In the quarterly InfoSec management meeting, the CISO, CTO, and Security Officer review the results and discuss how to address the identified root causes.

Image credit: @nci via Unsplash

Joost Krapels has completed his BSc. Artificial Intelligence and MSc. Information Sciences at the VU Amsterdam. Within ICT Institute, Joost provides IT advice to clients, advises clients on Security and Privacy, and further develops our internal tools and templates.